For the next several weeks, I’m going to write about some basics of artificial intelligence. AI has been around for decades, but it has become particularly popular during the last 20 years thanks to advances in computing. In short, artificial intelligence aims to use computers to solve complex problems more quickly and more accurately than humans can. Early AI was far different from what we have today. Typically, early AI systems relied on complex logic programmed directly into the system to solve problems. Examples include Dijkstra’s Algorithm and the logic programmed into most games. Modern systems, however, are capable of learning for themselves given enough data.

Distance Algorithms

The first set of algorithms necessary to understand AI are distance algorithms. These algorithms measure how far apart two data points are, which lets a learning system quantify how far its answer is from the right one. The three main distance algorithms are Euclidean, Manhattan, and Chebyshev. Euclidean distance measures distance as a straight line “as the crow flies” between points on a grid. Manhattan distance travels along one axis and then another, like a taxi traversing New York City. Finally, Chebyshev distance travels like a King on a chessboard: because the King can step diagonally, advancing along both axes at once, the distance is simply the largest difference along any single axis.

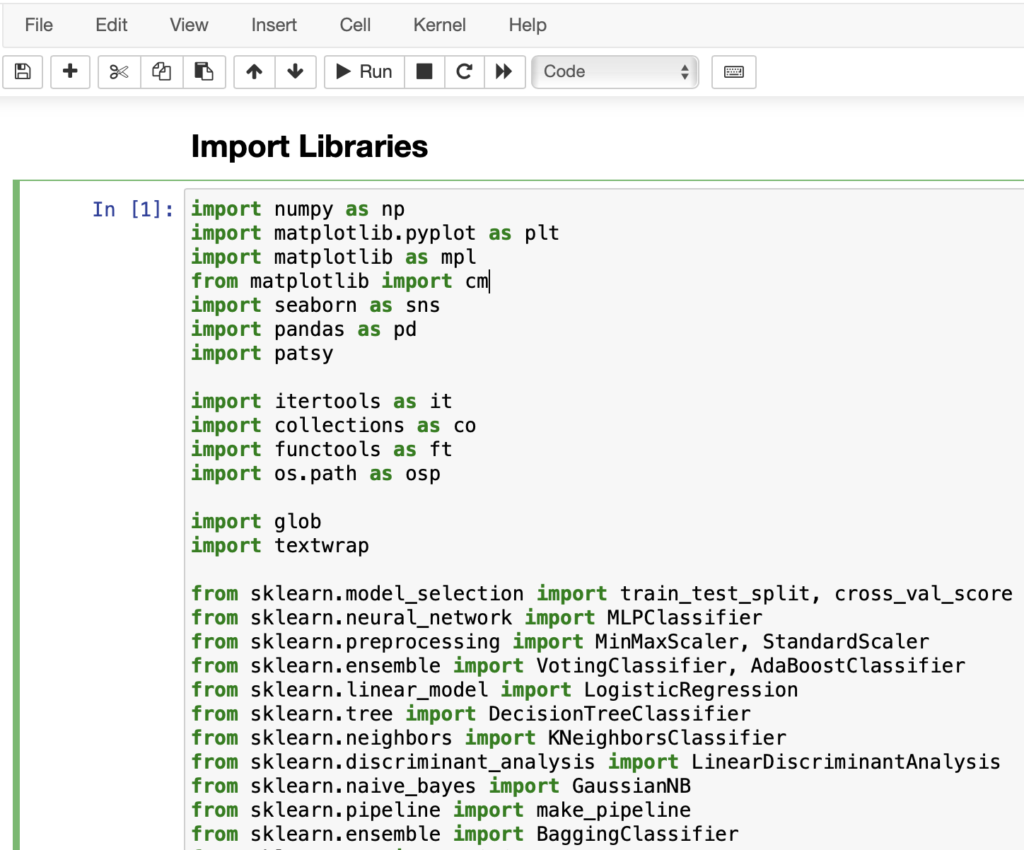
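For readers who prefer formulas, the three distances described above can be written out as follows. The symbols here are my own notation rather than anything from the code below: p and q are two data points, n is the number of dimensions, and p_i is the i-th coordinate of p.

```latex
\begin{aligned}
d_{\text{Euclidean}}(p, q) &= \sqrt{\sum_{i=1}^{n} (p_i - q_i)^2} \\
d_{\text{Manhattan}}(p, q) &= \sum_{i=1}^{n} \lvert p_i - q_i \rvert \\
d_{\text{Chebyshev}}(p, q) &= \max_{1 \le i \le n} \lvert p_i - q_i \rvert
\end{aligned}
```

For any pair of points the Chebyshev distance is never larger than the Euclidean distance, which in turn is never larger than the Manhattan distance.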

In each of the code snippets below, written in Java, two vectors, v1 and v2, are passed in, where each vector represents a data point. In each case, the length of the array determines the dimensionality of the data. For example, a two-element float[] is a 2-D vector that can be plotted on a Cartesian plane.
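To make the idea of dimensionality concrete, here is a small sketch of how data points might be declared. The class name VectorShapes, the helper dimensions, and the variable names are assumptions made up for this illustration.

```java
// Illustrative sketch only: VectorShapes and dimensions() are hypothetical names.
class VectorShapes {

    // The dimensionality of a data point is simply the length of its array.
    static int dimensions(final float[] v) {
        return v.length;
    }

    public static void main(String[] args) {
        final float[] point2d = {3.0f, 4.0f};        // (x, y)
        final float[] point3d = {1.0f, 2.0f, 3.0f};  // (x, y, z)

        System.out.println(dimensions(point2d)); // prints 2
        System.out.println(dimensions(point3d)); // prints 3
    }
}
```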

Euclidean Distance Algorithm

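    public static float euclidean(final float[] v1, final float[] v2) {
        float sum = 0;
        // Accumulate the squared difference in each dimension.
        for (int i = 0; i < v1.length; ++i) {
            sum += (v1[i] - v2[i]) * (v1[i] - v2[i]);
        }
        // The distance is the square root of the summed squares.
        return (float) Math.sqrt(sum);
    }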

In the above code, we iterate through two arrays of floating point numbers and sum the squares of the differences between corresponding elements. Finally, we return the square root of that sum, which is the distance.
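The loop above assumes both arrays have the same length. As a minimal sketch, one way to guard against mismatched dimensions is shown below, along with a worked example. The class name EuclideanExample and the choice of IllegalArgumentException are my own assumptions, not part of the original snippet.

```java
// Sketch under stated assumptions: EuclideanExample is a hypothetical class name,
// and the length check is an addition that the original method does not have.
class EuclideanExample {

    static float euclidean(final float[] v1, final float[] v2) {
        if (v1.length != v2.length) {
            throw new IllegalArgumentException("Vectors must have the same dimensionality");
        }
        float sum = 0;
        for (int i = 0; i < v1.length; ++i) {
            final float d = v1[i] - v2[i];
            sum += d * d; // squared difference in dimension i
        }
        return (float) Math.sqrt(sum);
    }

    public static void main(String[] args) {
        final float[] a = {1.0f, 2.0f};
        final float[] b = {4.0f, 6.0f};
        // Differences are 3 and 4, so the distance is sqrt(9 + 16) = 5.
        System.out.println(euclidean(a, b)); // prints 5.0
    }
}
```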

Manhattan Distance Algorithm

    public static float manhattan(final float[] v1, final float[] v2) {
        float sum = 0;
        // Add up the absolute difference in each dimension.
        for (int i = 0; i < v1.length; ++i) {
            sum += Math.abs(v1[i] - v2[i]);
        }
        return sum;
    }

For the Manhattan distance, we calculate and return the sum of the absolute values of the differences.

Chebyshev Distance Algorithm

    public static float chebyshev(final float[] v1, final float[] v2) {
        float result = 0;
        // Track the largest absolute difference seen in any dimension.
        for (int i = 0; i < v1.length; ++i) {
            float d = Math.abs(v1[i] - v2[i]);
            result = Math.max(d, result);
        }
        return result;
    }

Finally, in the Chebyshev algorithm, the distance is the largest absolute difference along any single dimension.
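To see how the three measures differ, it can help to run them on the same pair of points. The sketch below repeats the three methods from this post inside a hypothetical class named DistanceComparison and applies them to two 3-D points chosen purely for illustration.

```java
// Sketch only: DistanceComparison and the sample points are illustrative assumptions.
class DistanceComparison {

    static float euclidean(final float[] v1, final float[] v2) {
        float sum = 0;
        for (int i = 0; i < v1.length; ++i) {
            sum += (v1[i] - v2[i]) * (v1[i] - v2[i]);
        }
        return (float) Math.sqrt(sum);
    }

    static float manhattan(final float[] v1, final float[] v2) {
        float sum = 0;
        for (int i = 0; i < v1.length; ++i) {
            sum += Math.abs(v1[i] - v2[i]);
        }
        return sum;
    }

    static float chebyshev(final float[] v1, final float[] v2) {
        float result = 0;
        for (int i = 0; i < v1.length; ++i) {
            result = Math.max(Math.abs(v1[i] - v2[i]), result);
        }
        return result;
    }

    public static void main(String[] args) {
        // Per-dimension differences are 3, 4 and 0.
        final float[] a = {1.0f, 2.0f, 3.0f};
        final float[] b = {4.0f, 6.0f, 3.0f};

        System.out.println("euclidean = " + euclidean(a, b)); // sqrt(9 + 16 + 0) = 5.0
        System.out.println("manhattan = " + manhattan(a, b)); // 3 + 4 + 0 = 7.0
        System.out.println("chebyshev = " + chebyshev(a, b)); // max(3, 4, 0) = 4.0
    }
}
```

On the same input, the Manhattan distance is the largest and the Chebyshev distance is the smallest, with the Euclidean distance in between.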